Insights into the world of Cyber Attacks by Scraping Hackmageddon

The skills the authors demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

You must have heard it on the news: “Country X accuses country Y of launching a cyber attack against its infrastructure” or “Huge leak at Corporation X: account information of millions of users leaked.”

Sometimes you don’t even need to hear it on the news; it is right there, plastered all over your computer screen: “Your information has been encrypted, and the only way to recover it is to pay us.”

All of these are cyber attacks.

What is a cyber attack?

Cyber attacks are malicious Internet operations launched mostly by criminal organizations whose goal may be to steal money, financial data, or intellectual property, or simply to disrupt the operations of a certain company. Countries also get involved in so-called state-sponsored cyber attacks, in which they seek to obtain classified information about a geopolitical rival, or simply to “send a message.”

The global cost of cyber crime for 2015 was $500 billion.

That’s more than 5 times Google’s yearly cash flow of 90 billion dollars.

And that number is set to grow tremendously, to around 2 trillion dollars by 2019.

In this article, we explore the types of attacks cybercriminals use to drive up such a huge figure, and help you understand how they work and how they affect you.

Data Collection

I used a Scrapy spider to scrape Hackmageddon, a website with extensive data on cyber attack events dating back to 2011. Every 15 days, Hackmageddon publishes a list of attacks stored in a table. My spider was deployed to parse through the Category and Subcategory levels of the website. However, I learned that Scrapy, unlike Selenium, does not automate web browser interaction. I decided to start from the first table, from 2011.

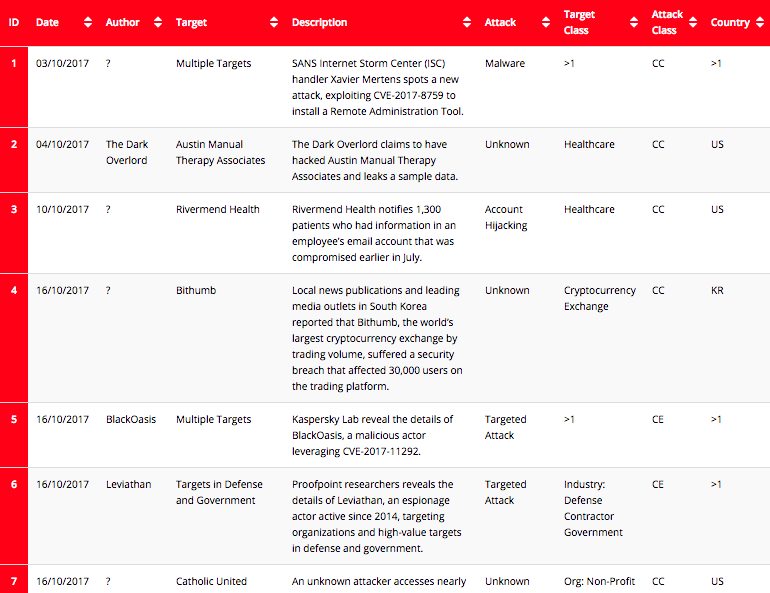

There were a few challenges in pulling the data together. First, the spider does not automatically advance from table to table: at the end of each page, a button leads to the next table. So after scraping the current page, I extracted the link behind the next-page button and had my spider request that page. Second, Hackmageddon changes its table schema every year, so I used control flow in my spider to handle the different schemas. In the end, I scraped almost the entire website. The information scraped included Date, Author, Target, Description, Attack, Target Class, and Country.

Data Wrangling & Visualization

Before analyzing the data, I needed to clean it. As expected, a lot of data was missing, because the motive of a cyber attack or the identity of the attacker is often unknown. I used Python's Pandas library to clean the dataset and then started visualizing it. Preliminary exploration revealed some interesting observations. The most attacked country is the United States, followed by '>1' (attacks in which the same attacker targeted more than one country), the UK, and India.
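A small Pandas sketch of this cleaning step uses made-up rows. Placeholder values are marked as missing, gaps are labelled "Unknown" instead of being dropped, and `value_counts()` produces a per-country tally like the one charted here. The column names and the `"?"` placeholder are assumptions.

```python
import numpy as np
import pandas as pd

# Toy rows standing in for the scraped table (hypothetical values; "?" is
# assumed here to be a placeholder for an unknown attacker).
raw = pd.DataFrame({
    "Author": ["Anonymous", None, "OurMine", "?"],
    "Country": ["US", "UK", "US", ">1"],  # ">1" = more than one country
    "Attack": ["DDoS", "Malware", "Account Hijacking", None],
})


def clean(df):
    """Mark placeholders as missing, then label every gap as 'Unknown'."""
    df = df.replace("?", np.nan)
    # Keep the rows: an attack with an unknown author is still an attack.
    return df.fillna("Unknown")


clean_df = clean(raw)
# Attacks per country, most attacked first (the data behind a bar chart).
country_counts = clean_df["Country"].value_counts()
```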

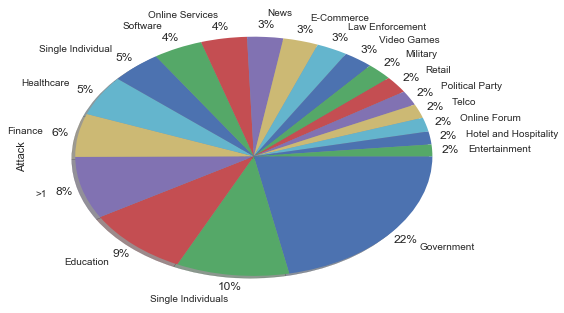

Two of the things I wanted to learn from Hackmageddon were what attackers target and what motivates them. The pie chart shows the distribution of targets by organization type. Governments and single individuals top the attackers' preferences, followed by education.

The next pie chart shows the motivations behind the attacks. Cyber crime ranks on top of the Motivations Behind Attacks chart with 71%.
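The percentages in pie charts like these can be computed with `value_counts(normalize=True)`. The following sketch uses invented labels, not the article's data.

```python
import pandas as pd

# Hypothetical cleaned column; the real data has one row per attack.
motivation = pd.Series(
    ["Cyber Crime", "Cyber Crime", "Cyber Crime", "Hacktivism", "Cyber Espionage"],
    name="Motivation",
)

# normalize=True turns counts into fractions that sum to 1 (the pie slices);
# multiplying by 100 gives percentages like the 71% quoted above.
shares = motivation.value_counts(normalize=True).mul(100).round(1)
# shares.plot.pie() would draw the chart (requires matplotlib).
```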

Cyber Crime covers crimes in which the computing device is the target, typically to gain network access, as well as crimes in which the computer is used as a weapon, for example to launch a denial-of-service (DoS) attack.

For Cyber Espionage, the goal is to gain illicit access to confidential information, typically that held by a government or other organization.

In Cyber War, the goal is to disrupt the activities of a state or organization, especially by deliberately attacking information systems for strategic or military purposes.

Hacktivism is more general. It’s defined as the practice of gaining unauthorized access to a computer system and carrying out various disruptive actions as a means of achieving political or social goals.

Once I knew the motives and target classes, the next question was: what techniques, or attack vectors, do attackers use to drive up such huge figures? I also wanted to know: who are the most notorious hackers of all time?

The answers are visualized below. This bar chart shows which techniques are employed. Account Hijacking and Targeted Attacks rank on top of the attack vectors, followed by DDoS and Malware.

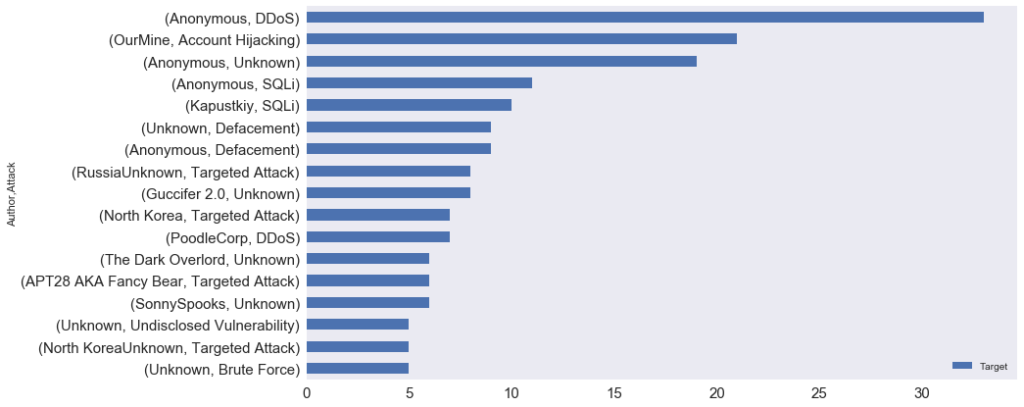

The next bar chart shows the most notorious hackers of all time. Anonymous leads with more than 75 attacks, followed by OurMine.

Anonymous: The group became known for a series of well-publicized distributed denial-of-service (DDoS) attacks on government, religious, and corporate websites. It has also been called a digital Robin Hood. In 2012, Time named Anonymous one of the "100 most influential people" in the world.

OurMine: a hacking group that mostly targets celebrities. It hacked the Twitter accounts of Wikipedia co-founder Jimmy Wales, Pokémon Go creator John Hanke, Twitter co-founder Jack Dorsey, Google CEO Sundar Pichai, and Facebook co-founder Mark Zuckerberg.

The bar chart below shows which techniques each hacker uses. Anonymous carried out more than 30 DDoS attacks, followed by OurMine, which launched more than 20 account hijacking attacks.
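An attacker-by-technique table like the one behind this chart can be built with `pd.crosstab`. This sketch uses a handful of invented rows. Swapping the `Attack` column for `Target` would give an attacker-by-target table instead.

```python
import pandas as pd

# Hypothetical rows: one per attack, with attacker and technique.
attacks = pd.DataFrame({
    "Author": ["Anonymous", "Anonymous", "OurMine", "OurMine", "Anonymous"],
    "Attack": ["DDoS", "DDoS", "Account Hijacking", "Account Hijacking", "Defacement"],
})

# Count attacks for every (attacker, technique) pair; missing pairs become 0.
by_author = pd.crosstab(attacks["Author"], attacks["Attack"])
# Put the most active attackers first.
by_author = by_author.loc[by_author.sum(axis=1).sort_values(ascending=False).index]
# by_author.plot.barh(stacked=True) would draw a stacked bar chart (needs matplotlib).
```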

The table above shows that a hacker named Guccifer 2.0 attacked a United States political party 9 times; Guccifer 2.0 has claimed responsibility for hacking the Democratic National Committee. North Korea mostly attacks the government of South Korea, and Eggfather targets online forums and dumps their users' usernames and passwords.

Natural Language Processing

The scraped dataset contains a lot of unstructured text, such as the attack descriptions. To make sense of it, I applied text mining, using Python's Scikit-learn library for machine learning and the NLTK library for natural language processing.

Methodology

Tokenization: Tokenization is the act of breaking up a string of text into pieces, such as words, keywords, phrases, symbols, and other elements, called tokens. Tokens can be individual words, phrases, or even whole sentences. In the process of tokenization, some characters, like punctuation marks, are discarded.
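Here is a standard-library sketch of word tokenization. Lowercasing the text and calling `re.findall(r"\w+", ...)` keeps runs of letters and digits and drops punctuation. This is equivalent to lowercasing and then using NLTK's `RegexpTokenizer(r"\w+")`. The article does not say exactly how its text was tokenized, so treat this as an assumption.

```python
import re


def tokenize(text):
    r"""Split text into lower-case word tokens.

    \w+ matches runs of letters, digits and underscores, so punctuation
    never ends up in a token and is effectively discarded.
    """
    return re.findall(r"\w+", text.lower())


tokens = tokenize("Hackers dumped 12,000 usernames, passwords!")
```

One side effect of this simple pattern is that "12,000" becomes two tokens, "12" and "000". That is usually harmless for clustering descriptions.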

TF-IDF Vectorizer: TF-IDF, short for term frequency–inverse document frequency, is a numerical statistic that is intended to reflect how important a word is to a document in a collection or corpus. The TF-IDF value increases proportionally to the number of times a word appears in the document, but is often offset by the frequency of the word in the corpus, which helps to adjust for the fact that some words appear more frequently in general. The output is one vector of TF-IDF weights per document.
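The following sketch applies scikit-learn's `TfidfVectorizer` to three invented descriptions. With default settings, each weight is the raw term count times a smoothed inverse document frequency, `ln((1 + n) / (1 + df(t))) + 1`, and each row is then scaled to unit length. A word that appears in every description gets a lower weight than one that appears in only one.

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# Invented descriptions standing in for the scraped "Description" column.
descriptions = [
    "hackers leak database of usernames and passwords",
    "hackers launch ddos attack on gaming servers",
    "hackers leak database of customer records",
]

# Defaults: raw counts x smoothed idf, then each row scaled to unit length.
vectorizer = TfidfVectorizer()
tfidf = vectorizer.fit_transform(descriptions)  # sparse, one row per description

vocab = vectorizer.vocabulary_  # term -> column index
row = tfidf[1].toarray().ravel()  # weights for the DDoS description
# "hackers" occurs in all three descriptions, "ddos" in only one,
# so "ddos" receives the higher weight in this row.
```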

Cosine Similarity: Cosine similarity is a measure of similarity between two non-zero vectors of an inner product space that measures the cosine of the angle between them. Two vectors with the same orientation have a cosine similarity of 1, two vectors at 90° have a similarity of 0, and two vectors diametrically opposed have a similarity of -1, independent of their magnitude. Cosine similarity is particularly used in positive space, where all vector components are non-negative (as with TF-IDF weights) and the outcome is neatly bounded in [0, 1].
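A from-scratch sketch can check the definition. Here `cosine` is a hypothetical helper. scikit-learn's `sklearn.metrics.pairwise.cosine_similarity` computes the same quantity for every pair of rows in a matrix. The first three calls reproduce the 1, 0, and -1 cases, with pairs of different lengths to show magnitude does not matter. The last call uses non-negative, TF-IDF-like vectors.

```python
import math


def cosine(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


same = cosine([3, 4], [6, 8])          # same orientation
orthogonal = cosine([3, 4], [-4, 3])   # 90 degrees apart
opposite = cosine([3, 4], [-6, -8])    # diametrically opposed
# Non-negative vectors (like TF-IDF rows) can only give values in [0, 1].
tfidf_like = cosine([0.0, 0.6, 0.8], [0.6, 0.8, 0.0])
```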

Multidimensional Scaling: the purpose of multidimensional scaling is to provide a visual representation of the pattern of proximities (i.e., similarities or distances) among a set of objects. MDS plots the attacks on a map such that those attacks that are perceived to be very similar to each other are placed near each other on the map, and those attacks that are perceived to be very different from each other are placed far away from each other on the map. It is a form of nonlinear dimensionality reduction.
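The following sketch shows a common way to get MDS coordinates from text, assuming scikit-learn. Cosine similarity is first turned into a distance (`1 - similarity`). `sklearn.manifold.MDS` with `dissimilarity="precomputed"` then places the points in two dimensions by minimizing stress (the SMACOF algorithm). The four descriptions are invented, and `random_state=1` is an arbitrary choice that makes the layout reproducible. The author's exact settings are not given.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.manifold import MDS
from sklearn.metrics.pairwise import cosine_similarity

# Invented descriptions: two password dumps, two DDoS attacks.
descriptions = [
    "hackers dump usernames and passwords from forum",
    "forum hacked usernames and passwords dumped online",
    "ddos attack takes gaming servers offline",
    "gaming servers hit by massive ddos attack",
]

tfidf = TfidfVectorizer(stop_words="english").fit_transform(descriptions)

# Distance = 1 - cosine similarity: 0 for identical wording, 1 for no shared terms.
distance = 1 - cosine_similarity(tfidf)
distance = np.clip((distance + distance.T) / 2, 0, None)  # tidy rounding noise
np.fill_diagonal(distance, 0)

# Place the points in 2-D so that map distances approximate these distances.
mds = MDS(n_components=2, dissimilarity="precomputed", random_state=1)
positions = mds.fit_transform(distance)  # one (x, y) pair per description
```

The two password-dump descriptions should end up closer to each other than to either DDoS description, which is the property the interactive map relies on.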

K-means Clustering: k-means clustering aims to partition n observations into k clusters in which each observation belongs to the cluster with the nearest mean, which serves as a prototype of the cluster. In practice, k is predefined; k points are selected at random as initial cluster centers; each object is assigned to its closest center according to Euclidean distance; and each center is then recomputed as the mean of its assigned objects, repeating the last two steps until the assignments stop changing.
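The following scikit-learn sketch uses four invented descriptions. `init="random"` picks k observations at random as the starting centers, matching the description above. scikit-learn's default, `"k-means++"`, spreads the starting centers out instead. `n_init=10` repeats the run from ten random starts and keeps the best. k=2 suits the toy data; the article used 12. Because TF-IDF rows have unit length, the squared Euclidean distance between two descriptions equals 2(1 - cosine similarity). Euclidean k-means therefore groups them by the same notion of similarity.

```python
from sklearn.cluster import KMeans
from sklearn.feature_extraction.text import TfidfVectorizer

# Invented descriptions: two password dumps, two DDoS attacks.
descriptions = [
    "hackers dump usernames and passwords from forum",
    "forum hacked usernames and passwords dumped online",
    "ddos attack takes gaming servers offline",
    "gaming servers hit by massive ddos attack",
]

tfidf = TfidfVectorizer(stop_words="english").fit_transform(descriptions)

# k is fixed in advance; init="random" starts from k randomly chosen rows,
# and the model alternates assignment and mean updates until it converges.
km = KMeans(n_clusters=2, init="random", n_init=10, random_state=0)
labels = km.fit_predict(tfidf)  # cluster index for each description
```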

In the D3.js interactive visualization above, hovering over a dot pops up the name of the organization that was hacked. If you scroll to the right, you will see the legend at the bottom right. Each legend entry lists the top terms of one cluster. There are 12 clusters, each with a different color.

Example: The first cluster, shown in sea green, has the top terms "dumps, passwords, usernames, records"; you will find it if you scroll to the far right. The organizations in this cluster are similar to each other in terms of how their attacks were described: all of them (mostly websites) were hacked, and the hackers dumped their usernames, passwords, and records. Hence, if one organization gets attacked, we can warn its neighbors to strengthen their security or prepare for similar targeted attacks.
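The "warn the neighbors" idea can be sketched by ranking the other organizations by the cosine similarity of their attack descriptions. The organization names and descriptions are invented, and `neighbors` is a hypothetical helper.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics.pairwise import cosine_similarity

# Invented organizations and attack descriptions.
orgs = ["Forum A", "Forum B", "Game Studio C", "Game Studio D"]
descriptions = [
    "hackers dump usernames and passwords from forum",
    "forum hacked usernames and passwords dumped online",
    "ddos attack takes gaming servers offline",
    "gaming servers hit by massive ddos attack",
]

tfidf = TfidfVectorizer(stop_words="english").fit_transform(descriptions)
similarity = cosine_similarity(tfidf)  # orgs x orgs matrix in [0, 1]


def neighbors(similarity, names, index, k=3):
    """Names of the k organizations most similar to names[index]."""
    order = np.argsort(-similarity[index])  # most similar first
    return [names[i] for i in order if i != index][:k]


# If Forum A is attacked, these organizations might be warned first.
alert_list = neighbors(similarity, orgs, 0, k=1)
```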

Conclusion

According to the visualization above, government organizations are most likely to fall into clusters 7, 8, and 11, because governments hold many confidential documents that hackers may want to steal or reveal. Healthcare organizations are most likely to fall into cluster 6, where hackers want to gain access to data or customer records. Financial firms are most likely to fall into cluster 12 because of their confidential financial databases. Online gaming websites are most likely to be attacked by DDoS to disrupt the websites.

The most attacked organization is the government.

Guccifer 2.0 was involved in the 2016 United States presidential election.

“Anonymous” performed the largest number of attacks.

Account Hijacking is the most common technique used by hackers.

The last cluster is the largest (383), with the top terms 'leaked, claims, database, anonymous'. This means that most attacks leak information or hack databases, and that Anonymous carried out the largest number of attacks.